Table of Contents

- Key Takeaways

- What is AI in Software Testing?

- Impact of AI on Testing Workflows and Processes

- What Software Market Data Shows About AI in Testing

- Limitations of AI in Software Testing

- Final Thoughts

- FAQs

AI in software testing is often presented as a breakthrough: faster automation, smarter test generation, and reduced manual effort.

But the reality is more nuanced.

While AI is accelerating parts of the testing lifecycle, it is not transforming testing in isolation. Many teams experimenting with AI still struggle to convert these capabilities into consistent, production-level outcomes.

In practice, AI in software testing is not about replacing traditional automation or human expertise. It is about introducing intelligence into how testing systems operate. AI helps analyze data, prioritize risks, and improve efficiency, but it still depends on structured frameworks, reliable test design, and engineering oversight.

There is also a growing gap between expectation and implementation. Many organizations see AI as a solution for faster testing, yet real-world adoption shows that its effectiveness varies depending on use case, system complexity, and integration maturity.

This creates an important shift in how testing should be approached.

AI is not a silver bullet. It is an enhancement layer that changes how automation works, how insights are generated, and how quality is measured.

This blog explores what AI in software testing actually means, where it delivers real value, where it falls short, and how organizations can integrate it into their testing strategy in a structured and sustainable way.

Related Read: Future of AI in software testing

Key Takeaways

- AI accelerates testing speed, but its real value lies in faster decision-making, not just faster execution.

- AI works as an intelligence layer on top of automation, not a replacement for structured testing practices.

- The biggest impact of AI is reducing time between execution, analysis, and release decisions.

- Faster defect detection, failure analysis, and reporting directly improve delivery velocity.

- AI adoption is growing rapidly, but most organizations are still in early or mid-maturity stages.

- Data quality and system integration are the biggest barriers to scaling AI in testing.

- AI performs best in stable, data-rich environments with strong automation foundations.

- Over-reliance on AI without human validation can lead to misleading insights and missed risks.

- AI shifts testing from activity-based execution to insight-driven quality engineering.

- High-performing teams use AI to run the right tests and make confident release decisions faster, not just to increase test volume.

What is AI in Software Testing?

AI in software testing refers to the use of machine learning, data analysis, and pattern recognition to enhance testing processes. Instead of relying only on predefined scripts, AI introduces the ability to learn from data and adapt to changes in application behavior.

Traditional testing approaches follow fixed rules and require manual updates when systems evolve. AI-enabled testing, on the other hand, analyzes historical test executions, defect patterns, and system behavior to support more adaptive and data-driven validation.

From a quality perspective, AI helps shift testing from activity-based execution to insight-driven validation. It enables teams to focus on risk areas, understand failure patterns, and prioritize testing efforts based on actual system behavior rather than assumptions.

In practice, AI works by processing inputs such as test results, user interactions, and failure trends. These insights can help identify high-risk areas, suggest test scenarios, and improve how testing efforts are prioritized across the lifecycle.

However, AI does not replace structured testing practices or human expertise. It functions as an intelligence layer that enhances existing frameworks, requiring reliable data, well-designed automation, and engineering oversight to ensure consistent and measurable quality outcomes.

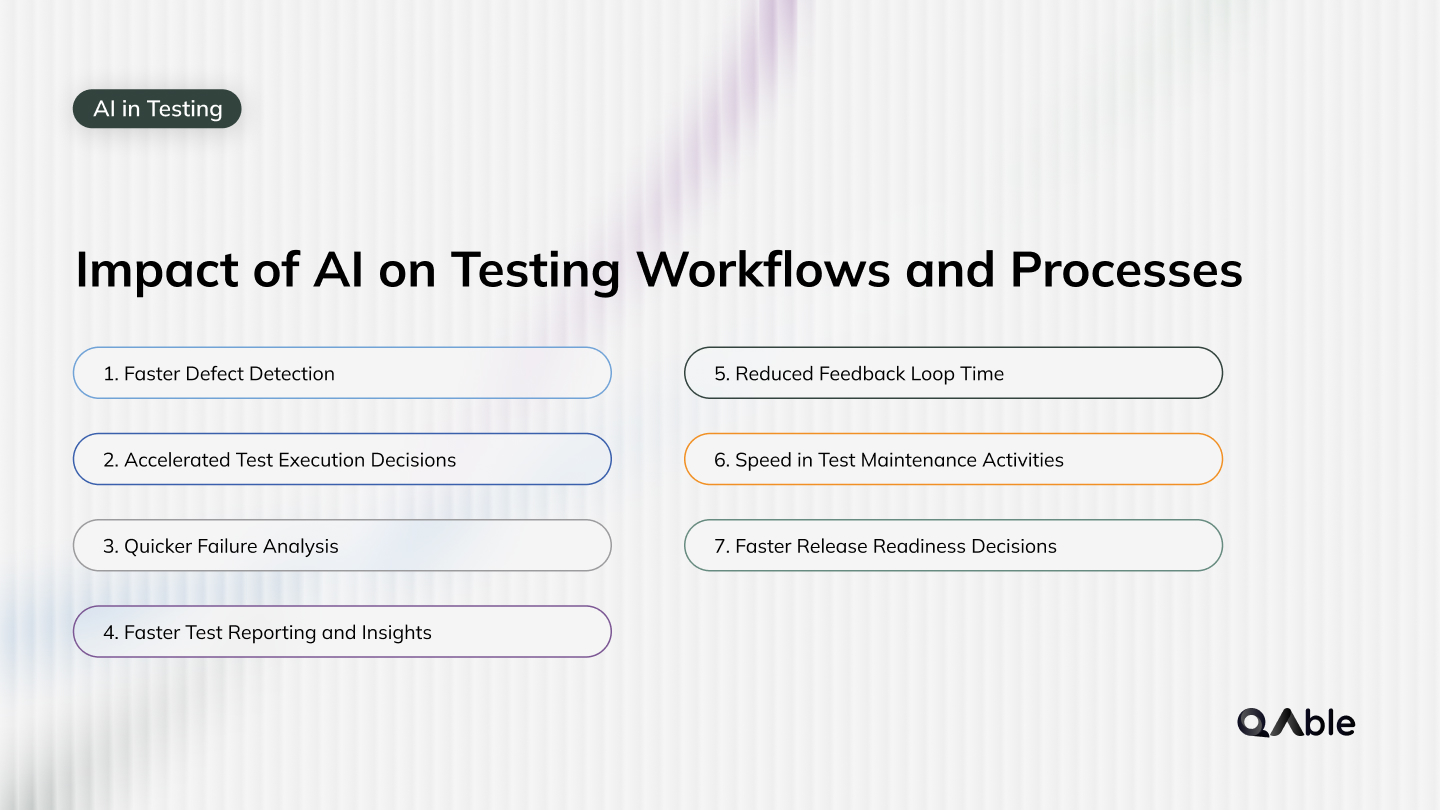

Impact of AI on Testing Workflows and Processes

AI impacts testing workflows by accelerating how quickly teams can detect, analyze, and act on quality signals. The real value shows up in how fast teams move from execution to insight.

1. Faster Defect Detection

AI helps identify failure patterns early instead of waiting for full test cycles to complete.

- Early anomaly detection

- Pattern-based bug identification

- Faster identification of high-risk flows

This reduces the time between code change and defect discovery.

2. Accelerated Test Execution Decisions

Instead of running complete suites on every change, AI helps teams decide quickly which tests to execute.

- Selective regression runs

- Risk-based test triggering

- Elimination of low-value tests

This directly cuts down execution time without reducing coverage where it matters.
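To make selective, risk-based execution concrete, here is a minimal sketch. It uses a simple rule-based heuristic as a stand-in for a learned model: a test is selected if it covers a changed file or has failed often in recent runs. The `SuiteEntry` type, the `select_tests` function, the file names, and the 0.2 threshold are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuiteEntry:
    """One test plus the signals used to decide whether to run it (illustrative)."""
    name: str
    covered_files: frozenset      # assumption: a test-to-file coverage map exists
    recent_failure_rate: float    # fraction of recent runs that failed, 0.0 to 1.0


def select_tests(entries, changed_files, min_failure_rate=0.2):
    """Return names of tests that touch changed files or have failed often recently."""
    changed = set(changed_files)
    selected = []
    for entry in entries:
        touches_change = bool(entry.covered_files & changed)
        historically_risky = entry.recent_failure_rate >= min_failure_rate
        if touches_change or historically_risky:
            selected.append(entry.name)
    return selected


suite = [
    SuiteEntry("test_checkout", frozenset({"cart.py", "payment.py"}), 0.05),
    SuiteEntry("test_search", frozenset({"search.py"}), 0.00),
    SuiteEntry("test_login", frozenset({"auth.py"}), 0.30),
]

# A change to cart.py selects the checkout test (coverage) and the login test (history).
print(select_tests(suite, ["cart.py"]))
```

In a real setup the coverage map and failure rates would come from execution history, and the fixed threshold could be replaced by a trained model's risk score. The structure of the decision stays the same.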

3. Quicker Failure Analysis

Debugging often consumes more time than execution itself.

AI reduces analysis time significantly.

- Auto-grouping of similar failures

- Flaky vs real failure detection

- Root cause signal suggestions

Teams spend less time investigating and more time fixing.
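The grouping and flaky-detection ideas above can be sketched without any machine learning at all. The example below normalizes error messages so that similar failures share one signature, and flags a test as a flaky candidate when it both passed and failed on the same commit. The function names and sample messages are hypothetical; production tools typically use richer signals such as stack traces, logs, and timing.

```python
import re
from collections import defaultdict


def failure_signature(message):
    """Normalize an error message so similar failures map to the same key."""
    sig = re.sub(r"0x[0-9a-fA-F]+", "<hex>", message)  # memory addresses
    sig = re.sub(r"\d+", "<n>", sig)                   # timeouts, ids, counts
    return sig.strip().lower()


def group_failures(failures):
    """Group (test_name, message) pairs by normalized signature."""
    groups = defaultdict(list)
    for test_name, message in failures:
        groups[failure_signature(message)].append(test_name)
    return dict(groups)


def is_likely_flaky(outcomes_on_same_commit):
    """Mixed outcomes on identical code suggest flakiness rather than a real defect."""
    return len(set(outcomes_on_same_commit)) > 1


failures = [
    ("test_checkout", "Timeout after 3000 ms"),
    ("test_payment", "Timeout after 5000 ms"),
    ("test_login", "Element #login not found"),
]
for signature, tests in group_failures(failures).items():
    print(signature, tests)
```

Here the two timeout failures collapse into one group, so an engineer investigates one likely cause instead of two separate reports.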

4. Faster Test Reporting and Insights

Traditional reports are static and require manual interpretation.

AI converts results into actionable insights instantly.

- Real-time execution insights

- Failure trend summaries

- Module-level risk visibility

This speeds up decision-making after every test cycle.
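As a rough illustration of module-level risk visibility, the sketch below aggregates raw pass/fail results into a failure rate per module and ranks the modules. The input shape (a list of `(module, passed)` pairs) and the function names are assumptions made for this example, not the output format of any particular tool.

```python
from collections import Counter


def module_failure_rates(results):
    """results: iterable of (module, passed) pairs. Returns {module: failure_rate}."""
    totals = Counter()
    failures = Counter()
    for module, passed in results:
        totals[module] += 1
        if not passed:
            failures[module] += 1
    return {module: failures[module] / totals[module] for module in totals}


def riskiest_modules(results, top_n=3):
    """Rank modules by failure rate, highest first."""
    rates = module_failure_rates(results)
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)[:top_n]


results = [
    ("checkout", False),
    ("checkout", True),
    ("search", True),
    ("search", True),
    ("login", False),
]
print(riskiest_modules(results, top_n=2))
```

A summary like this is plain aggregation. The AI contribution in practice is usually in interpreting trends across many such cycles, which this sketch does not attempt.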

5. Reduced Feedback Loop Time

Feedback cycles define delivery speed.

AI compresses this loop by providing earlier and clearer signals.

- Immediate risk identification

- Faster validation of changes

- Continuous quality feedback

Developers get actionable feedback much earlier in the pipeline.

6. Speed in Test Maintenance Activities

Maintenance delays often slow down releases.

AI helps reduce turnaround time for fixing broken tests.

- Quicker identification of impacted scripts

- Faster adaptation to UI or flow changes

- Reduced manual debugging effort

This keeps automation suites usable even in fast-changing systems.

7. Faster Release Readiness Decisions

Release approvals are often delayed due to uncertainty.

AI provides quicker clarity on system stability.

- Confidence scoring for releases

- Risk-based go or no-go signals

- Visibility into unstable components

This helps teams move faster without compromising quality.
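One way to picture confidence scoring is as a weighted blend of a few quality signals. The sketch below is an assumption-heavy illustration: the three inputs, the weights, the 0.9 threshold, and the rule that any open critical defect blocks a release are all hypothetical choices, not an industry standard.

```python
def release_confidence(pass_rate, flaky_rate, open_critical_defects,
                       weights=(0.6, 0.2, 0.2)):
    """Blend three signals into a 0.0 to 1.0 score (weights are illustrative)."""
    w_pass, w_stability, w_defects = weights
    # Each open critical defect shrinks this factor: 0 -> 1.0, 1 -> 0.5, 3 -> 0.25.
    defect_factor = 1.0 / (1 + open_critical_defects)
    score = (w_pass * pass_rate
             + w_stability * (1.0 - flaky_rate)
             + w_defects * defect_factor)
    return round(score, 3)


def go_no_go(score, open_critical_defects, threshold=0.9):
    """Turn the score into a signal; a critical defect always blocks (assumed policy)."""
    if open_critical_defects > 0:
        return "no-go"
    return "go" if score >= threshold else "no-go"


score = release_confidence(pass_rate=0.98, flaky_rate=0.05, open_critical_defects=0)
print(score, go_no_go(score, open_critical_defects=0))
```

The score is an input to a human decision rather than the decision itself. Keeping the hard rule for critical defects separate from the weighted score makes the signal easier to explain and audit.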

From a workflow perspective, AI reduces delays between execution, analysis, and decision-making. The real impact is not just faster testing, but faster understanding of quality.

Related Read: Is AI Really Improving Software Testing?

What Software Market Data Shows About AI in Testing

AI in software testing is not just a trend. Market data from leading research firms shows a clear shift toward AI-driven quality engineering, but also highlights a gap between adoption and real impact.

Source: Capgemini World Quality Report

1. AI Adoption in Testing is Already Mainstream

Industry reports show that AI is no longer experimental in QA.

- ~89% of organizations are exploring or implementing AI in quality engineering

- 37% already have AI in production workflows

- 52% are still in pilot or experimentation phases

This indicates that most teams have started the journey, but maturity levels vary significantly.

2. Enterprise-Scale Adoption is Still Limited

Despite high interest, very few organizations have fully scaled AI in testing.

- Only 15% of organizations have implemented AI at enterprise scale

This gap shows that moving from experimentation to real impact is still a major challenge.

3. AI is Directly Improving Automation Speed

AI is already influencing execution efficiency in testing workflows.

- 72% of organizations report faster test automation using AI

This reinforces that AI’s strongest immediate value is in speeding up automation and execution cycles.

4. AI is Redefining Quality Engineering, Not Just Testing

Market reports highlight a broader shift beyond testing.

- AI is embedding quality across the entire software lifecycle

- Teams are moving from testing phases to continuous quality engineering

This means testing is becoming part of a larger, integrated quality system.

5. Skill Gaps and Data Challenges are Slowing Adoption

Adoption is not just a tooling problem.

- 50% of organizations report lack of AI/ML skills

- 67% cite data privacy and data readiness challenges

- 64% struggle with integration into existing systems

These challenges explain why many AI initiatives do not scale effectively.

6. The Shift is Strategic, Not Just Technical

AI in testing is moving from experimentation to business alignment.

- Organizations are focusing more on ROI, governance, and measurable outcomes

- AI is being evaluated based on impact on release speed and quality outcomes

This reflects a transition from “trying AI” to “justifying AI”.

From a quality perspective, the data clearly shows that AI is accelerating testing workflows, especially in automation and execution speed. However, real value comes only when AI is aligned with strong testing foundations, clean data, and structured processes.

Related Read: Why Quality Assurance is a Must for Your Business?

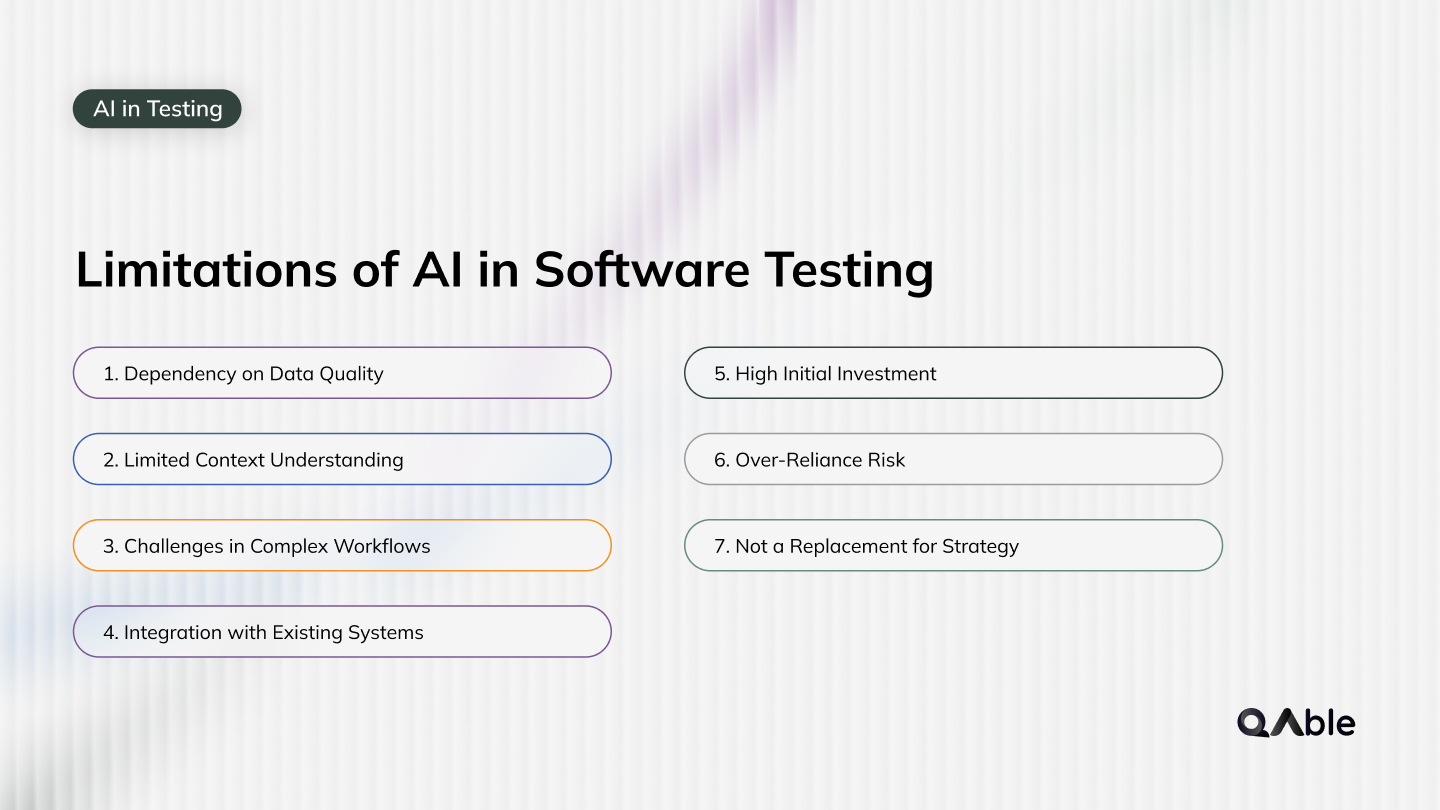

Limitations of AI in Software Testing

AI improves speed and decision-making in testing, but it is not a complete solution. Many teams overestimate its capabilities, which leads to unrealistic expectations and poor outcomes.

1. Dependency on Data Quality

AI systems rely heavily on historical data to generate insights. Common problems in that data include:

- Incomplete test data

- Inconsistent execution history

- Noisy or flaky results

If the data is not reliable, AI outputs can be misleading and impact decision-making.
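Before feeding execution history into any AI tooling, a quick audit can reveal how trustworthy it is. The sketch below counts incomplete records and test/commit pairs with conflicting outcomes. The record fields (`test`, `commit`, `outcome`) and the function name are assumptions for illustration; real histories will have their own schema.

```python
def audit_history(runs, required=("test", "commit", "outcome")):
    """Report basic reliability signals for a list of run records (dicts)."""
    def is_complete(run):
        return all(run.get(field) not in (None, "") for field in required)

    total = len(runs)
    if total == 0:
        return {"records": 0, "incomplete_ratio": None, "inconsistent_pairs": 0}

    incomplete = sum(1 for run in runs if not is_complete(run))

    # Collect every outcome seen for the same test on the same commit.
    outcomes = {}
    for run in runs:
        if is_complete(run):
            key = (run["test"], run["commit"])
            outcomes.setdefault(key, set()).add(run["outcome"])
    inconsistent = sum(1 for seen in outcomes.values() if len(seen) > 1)

    return {
        "records": total,
        "incomplete_ratio": incomplete / total,
        "inconsistent_pairs": inconsistent,
    }


runs = [
    {"test": "test_login", "commit": "abc123", "outcome": "pass"},
    {"test": "test_login", "commit": "abc123", "outcome": "fail"},
    {"test": "test_search", "commit": "abc123", "outcome": "pass"},
    {"test": "test_cart", "commit": "abc123", "outcome": None},
]
print(audit_history(runs))
```

A high incomplete ratio or many inconsistent pairs suggests the history needs cleanup before any insight derived from it is trusted.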

2. Limited Context Understanding

AI can identify patterns, but it does not fully understand product intent. The gaps usually show up in:

- Business logic interpretation

- User experience expectations

- Feature-level nuances

This makes human validation essential for ensuring correctness.

3. Challenges in Complex Workflows

AI struggles with highly dynamic or complex application flows.

- Multi-step user journeys

- Dynamic UI behavior

- Conditional workflows

These scenarios often require structured test design rather than pattern recognition.

4. Integration with Existing Systems

Introducing AI into established testing ecosystems is not always seamless.

- Compatibility with frameworks

- CI/CD integration challenges

- Data pipeline alignment

Without proper integration, AI can add complexity instead of efficiency.

5. High Initial Investment

AI adoption often requires upfront investment in tools, infrastructure, and training.

- Platform costs

- Setup effort

- Team upskilling

Organizations must evaluate whether the expected value justifies the cost.

6. Over-Reliance Risk

Relying too much on AI can reduce critical thinking in testing.

- Blind trust in AI outputs

- Reduced manual validation

- Missed edge cases

AI should support decisions, not replace engineering judgment.

7. Not a Replacement for Strategy

AI cannot define what should be tested or why.

- Test strategy design

- Coverage decisions

- Risk identification

These still depend on experienced QA engineers.

From a quality perspective, AI works best as a supporting layer within a structured testing ecosystem. Teams that treat AI as an enhancement rather than a replacement are more likely to achieve consistent and reliable outcomes.

Related Read: Top five software testing myths and facts you need to know

Final Thoughts

AI is changing how testing operates, not by replacing it, but by making it more intelligent, faster, and more focused. The real value of AI lies in how quickly teams can move from execution to insight, and from insight to action.

However, AI alone does not guarantee better quality. Without structured automation, reliable data, and clear testing strategies, AI can create noise instead of clarity. The impact comes from how well AI is integrated into existing workflows, not just from adopting new tools.

High-performing teams are not using AI to run more tests. They are using it to run the right tests, understand failures faster, and make confident release decisions.

At QAble, this approach is built around quality intelligence. By combining automation, data-driven insights, and engineering expertise, testing becomes a continuous feedback system rather than a one-time activity. This allows teams to improve both speed and quality without compromising either.

AI will continue to evolve, but the goal of testing remains the same: deliver reliable software with confidence, clarity, and consistency.

Related Read: Measuring Test Automation's Business Impact


Viral Patel is the Co-founder of QAble, delivering advanced test automation solutions with a focus on quality and speed. He specializes in modern frameworks like Playwright, Selenium, and Appium, helping teams accelerate testing and ensure flawless application performance.
